NSX-T and NSX ALB Installation Challenges

I already had a working NSX Advanced Load Balancer, but because CSE 4.0 requires version 21.1.3, I had to downgrade/recreate the NSX ALB cluster (yes, there were no customers running on it yet 😀).

Actually, this article mostly points to solutions other people have already found and that I applied; I am writing it in the hope that more people can find the solution easily.

Everything started when I deleted NSX ALB from NSX-T Manager (version 3.2.1.0.0.19801963) in order to install 21.1.3.

The first problem was with the NSX ALB OVA file controller-21.1.3-2p2-9049.ova. Strangely, the upload never finished; it hung sometimes at 99%, sometimes at 100% 😀

Bakingclouds found the reason, which is a missing certificate on the file; please read this article. Simply put, the file has no valid certificate, so the upload never finishes. The workaround is to find a lower version of NSX ALB, install it, and then upgrade it to the required one 😀

Another problem: when you try to deploy a new NSX Advanced Load Balancer cluster, you get interesting errors like “Controller VM Configuration Failed”, which you can see here.

I don’t know yet whether this happens only on version 3.2.1.0.0.19801963, but something like a cache remains in the NSX configuration and the related area needs to be cleaned up. I’m sharing two good articles: 1, 2

That’s it.

VM

Ubuntu 22.04 Cloud-Init vSphere vCD Customization Hell

This article is for anyone who still wants to keep cloud-init on Ubuntu 22.04 and use guest customization with VMware Cloud Director.

It may also show some very simple things, what they are, and why I am doing them.

Everything started with the question of how I could customize Ubuntu 22.04 without uninstalling cloud-init.

The main problem is that everything appears to work after customization, but cloud-init sets the network to DHCP after the next reboot (KB 71264)!

Please read these links without getting bored: 1, 2. Somehow cloud-init decides it needs to recustomize the VM, and because there is no input from VMware at that point, it recustomizes the network settings and sets them to DHCP 😀

The first link is of course from VMware; you always need to check Guest OS Customization support, so use this link with the relevant OS and vCenter versions. For Ubuntu 22.04, the contents of the support links are not enough.

In short, here is what you should do before customization:

I enabled the root password and password-based root login before executing any of the following commands, and deleted the user created at installation time. To delete that user, I rebooted the server after enabling root and then deleted it.

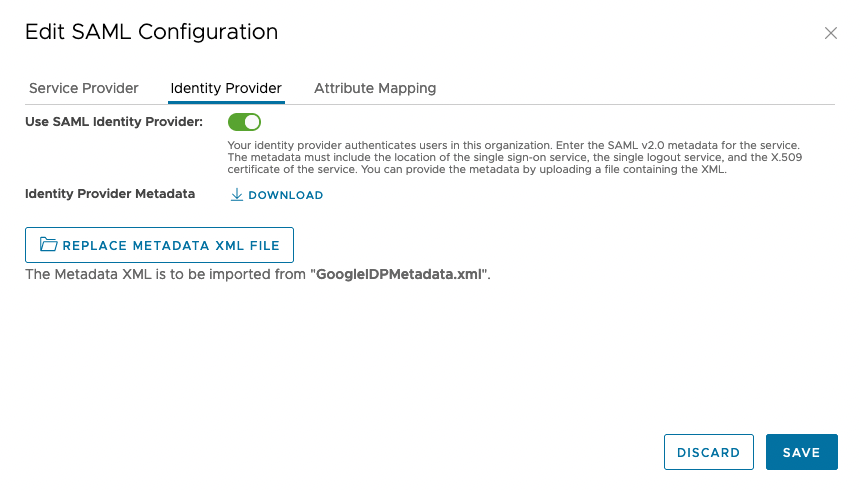

Unauthorized Is Sometimes Not Unauthorized: vCloud Director 10.2.2

Actually, the problem is not about vCD 10.2.2, and it is not about the username and password either!

It is very hard to understand the problem, or maybe easy to catch, but you must be careful.

The guilty party is the max header limit 😀 and myself, maybe you too 😀

What happened: when I couldn’t see the rights bundle correctly in the provider UI, I somehow clicked SAVE, and vCD overwrote it and removed all rights from “Default set of tenant rights”, and I started to get “Authentication Error”.

But the interesting thing is that in the events I could see the login succeed.

First I fixed haproxy.

To tune haproxy, add these parameters under the global section:

tune.bufsize 65536

tune.http.maxhdr 1024
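Before reloading haproxy it can help to confirm that both parameters really ended up under global and not in some other section. The sketch below is only a rough checker under my own assumptions: the function names are hypothetical, the parser is deliberately simplified (it only knows a handful of section keywords and ignores quoting), and it is not part of haproxy itself.

```python
# Simplified check: are the two tunables present in the 'global' section?
# SECTION_KEYWORDS is an incomplete, illustrative list of haproxy section names.
SECTION_KEYWORDS = {"global", "defaults", "frontend", "backend", "listen",
                    "userlist", "peers", "resolvers"}
WANTED = {"tune.bufsize": "65536", "tune.http.maxhdr": "1024"}


def global_settings(cfg_text):
    """Return {keyword: first argument} for directives inside 'global'."""
    in_global = False
    found = {}
    for raw in cfg_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        parts = line.split()
        if parts[0] in SECTION_KEYWORDS:
            in_global = parts[0] == "global"  # entering a new section
            continue
        if in_global and len(parts) >= 2:
            found[parts[0]] = parts[1]
    return found


def missing_tunables(cfg_text, wanted=WANTED):
    """Return the wanted tunables that are absent or set to another value."""
    found = global_settings(cfg_text)
    return {k: v for k, v in wanted.items() if found.get(k) != v}


if __name__ == "__main__":
    sample = """
global
    log /dev/log local0
    tune.bufsize 65536
defaults
    mode http
"""
    print(missing_tunables(sample))
```

For a real check, read your own haproxy.cfg into a string and pass it to missing_tunables; an empty dict means both values are set as listed above.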

Note: I tried HTTP/2, but something went wrong and I went back to 1.1; maybe someone can try it and comment on this article.

Then check the 9.5 release notes and set the max header size for it as well, but don’t forget to check the related values first and apply the change only if they are not correct.

Without this you can see “HTTP 502 errors” when you inspect the page.

Thanks to the developers 😀 there is no warning like “Hey, you will overwrite all rights, are you sure?”, and the debug log does not indicate a permission or missing-rights error instead of “Authentication Error”.

Finally, I had to send all the rights back via the API.

Note for you: always back up your rights and roles at the start, and also whenever you make a change; maybe one day you will need them to set everything back via lovely Swagger.
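A backup like that can be scripted. What follows is a minimal sketch, not the exact calls I used: the /cloudapi/1.0.0/ resource paths (for example roles or rightsBundles), the Accept version 35.0, the Bearer token header, and the values / pageCount fields of the paged response are all assumptions you should verify against the Swagger page of your own vCD version. The function names are hypothetical.

```python
import json
import time
import urllib.request
from pathlib import Path


def make_get_page(base_url, resource, token, api_version="35.0", page_size=128):
    """Build a page getter for one resource (URL layout is an assumption)."""
    def get_page(page):
        url = f"{base_url}/cloudapi/1.0.0/{resource}?page={page}&pageSize={page_size}"
        req = urllib.request.Request(url, headers={
            "Accept": f"application/json;version={api_version}",
            "Authorization": f"Bearer {token}",
        })
        with urllib.request.urlopen(req) as resp:
            return json.load(resp)
    return get_page


def fetch_all(get_page):
    """Collect the 'values' of every page until 'pageCount' is reached."""
    items, page = [], 1
    while True:
        body = get_page(page)
        items.extend(body.get("values", []))
        if page >= body.get("pageCount", 1):
            return items
        page += 1


def save_backup(name, items, directory="."):
    """Write the items to <name>-<timestamp>.json and return the path."""
    stamp = time.strftime("%Y%m%d-%H%M%S")
    path = Path(directory) / f"{name}-{stamp}.json"
    path.write_text(json.dumps(items, indent=2))
    return path
```

Usage would look like save_backup("roles", fetch_all(make_get_page("https://vcd.example.com", "roles", token))), where the host name and the token are placeholders. Keeping the page getter separate makes it easy to try the paging and file logic without touching a live cell.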

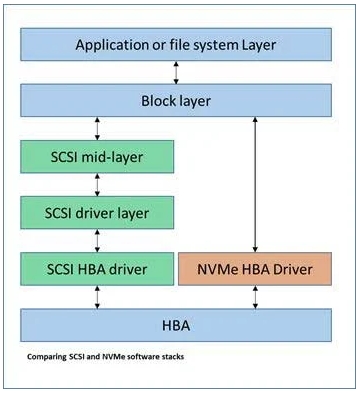

NVMe-oF

I took a quick look at what NVMe-oF is, what the terms mean, why it is used, and what it will change for us compared with traditional architectures; for VMware users I also added HPP and a few example commands for troubleshooting …

NVMe is simply an interface and protocol used to attach the SSDs in our servers to the PCIe bus.

NVMe wants to address two things: speed and low latency. To do that, it was necessary to get rid of interfaces such as SATA and SAS, and also of SCSI, an old command processing and queueing model that is not suited to parallel operation. Some articles compare NVMe to a robot with many arms and SCSI to a robot with a single arm; we could explain the same thing with all sorts of examples such as firewalls, load balancers, or the network queues of virtual machines. The point is that we have a fast medium that can do a lot of work, so we need to give it far more work. SCSI cannot do that, so NVMe comes along and speeds things up with a queue structure that can work in parallel, command sets that can run at the same time, and, by design, direct access through fewer layers.

The reach of NVMe is limited by the maximum number of disks in the physical server it sits in, by disk size, and by the fact that access can only be local.

More total capacity, better utilization, shared access, and services we know from the storage world such as snapshots and dedup required a different approach, which meant carrying NVMe over a fabric and adapting it to what we call traditional storage arrays.

There are two types of fabric for transporting NVMe: one is RDMA and the other is Fibre Channel.

When you say RDMA fabric, you will come across Ethernet-based connection types such as RoCE and iWARP.

When you say FC fabric, you get NVMe-oF over the SAN we already know.

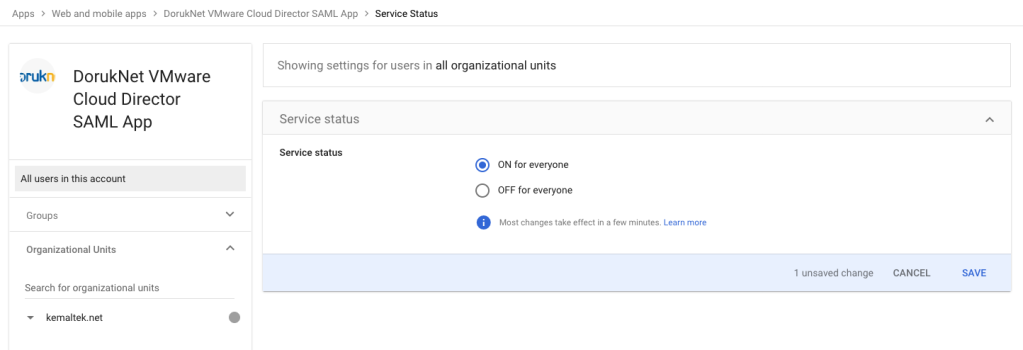

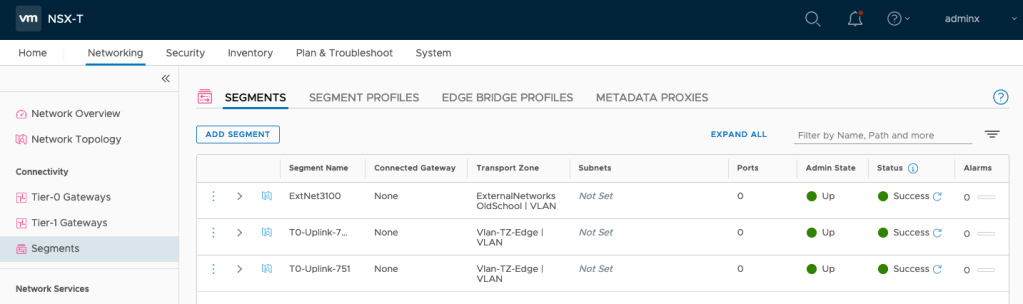

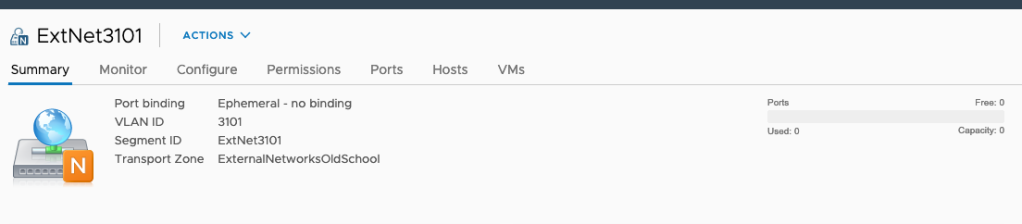

NSX-T Backend: Importing Segments to OrgvDC like the Old-Days External Networks

When I saw there is no direct network for the NSX-T backend I was a little surprised, and wondered where the old port groups had gone that we used to add under External Networks and then assign to an OrgvDC.

Good news: if you are still using the NSX-V backend it is still allowed, and you can continue until you migrate to NSX-T.

Coming back to NSX-T, there is still a way to do it, but in a different style:

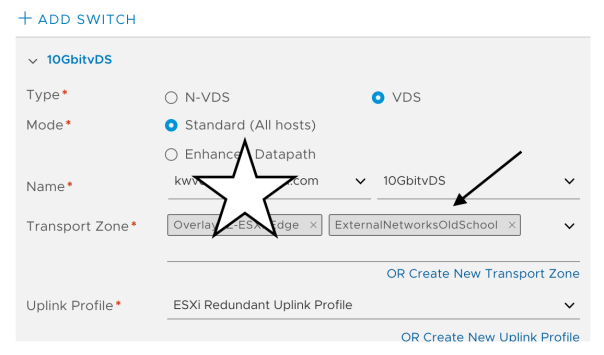

The new object is called a segment. I mean that instead of creating a port group via vCenter, we create a segment, which shows up as an NSX-T managed port group; all other settings like uplink profile, modes and so on are managed via NSX-T Manager.

Go to Segments under Networking and click ADD SEGMENT.

Don’t forget that the related Transport Zone also needs to be assigned to the related vSphere nodes (the hosts behind vCenter), which determines under which distributed switch the port group will be created.

Note: I’m using vSphere 7 with vDS-integrated NSX-T.

Set only the name of the segment; the important part is that you have to choose the Transport Zone and set the VLAN ID.
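I did this in the UI, but the same three inputs (name, Transport Zone, VLAN ID) map onto a single NSX-T Policy API call. The sketch below only builds the request and does not send it. The PATCH path under /policy/api/v1/infra/segments/ and the transport-zone path under the default site and enforcement point are my assumptions for a standard single-site setup; segment_request and all the argument values are made up for illustration, so compare them with the API guide of your NSX-T version first.

```python
import json

# Assumed layout for a default single-site NSX-T deployment.
TZ_PREFIX = "/infra/sites/default/enforcement-points/default/transport-zones/"


def segment_request(segment_id, display_name, vlan_id, transport_zone_id):
    """Return (method, path, json_body) for creating a VLAN-backed segment."""
    path = f"/policy/api/v1/infra/segments/{segment_id}"
    body = {
        "display_name": display_name,
        "vlan_ids": [str(vlan_id)],  # the API expects VLAN IDs as strings
        "transport_zone_path": TZ_PREFIX + transport_zone_id,
    }
    return "PATCH", path, json.dumps(body)


if __name__ == "__main__":
    # Hypothetical names; replace with your own segment and transport zone.
    method, path, body = segment_request("ext-vlan-100", "ext-vlan-100", 100, "my-vlan-tz-id")
    print(method, path)
    print(body)
```

The printed method, path and body can then be sent with whatever HTTP client and credentials you normally use against NSX-T Manager.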

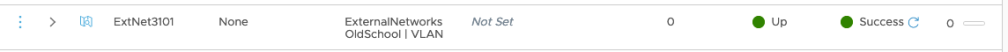

Wait for the status to turn green ….

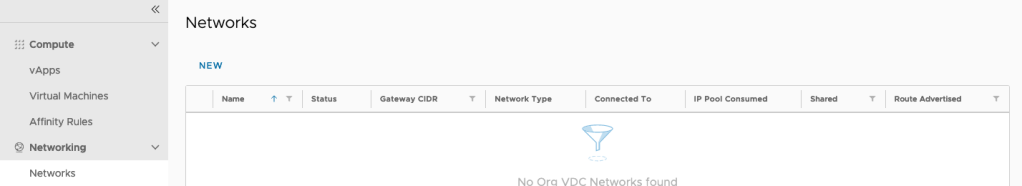

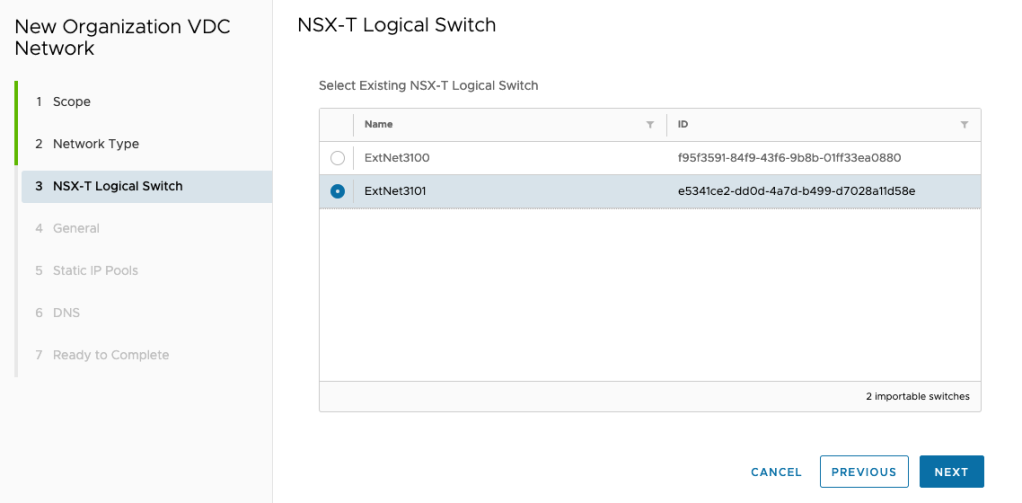

Then, via the vCD Administrator tenant portal, you can import this segment into the related organization, again like the old days …

NEW –> Current Organization –> Choose Imported

Choose the segment you created before.

Then set the gateway, IP range and DNS like before …

Go to vCenter and you will see the segment like a port group, but it is an NSX-T managed segment ….

Happy networking 😛 😀

VM

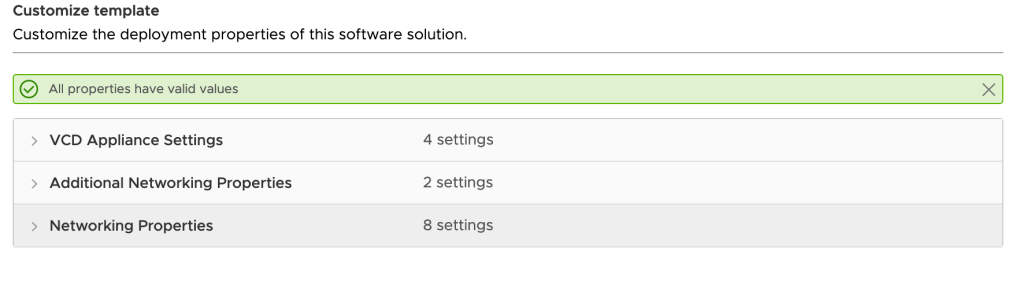

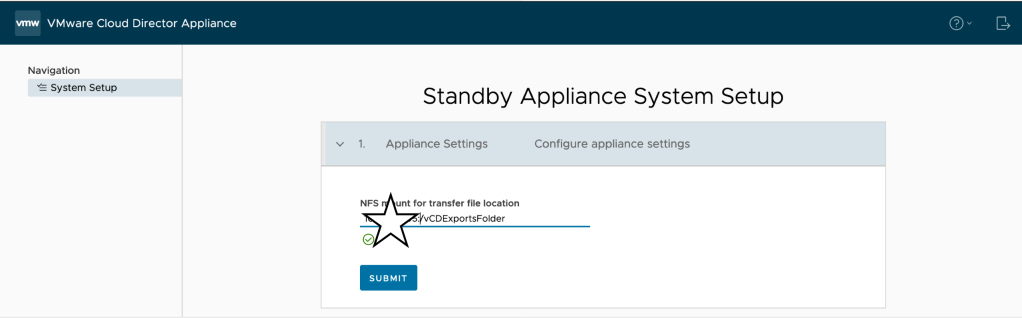

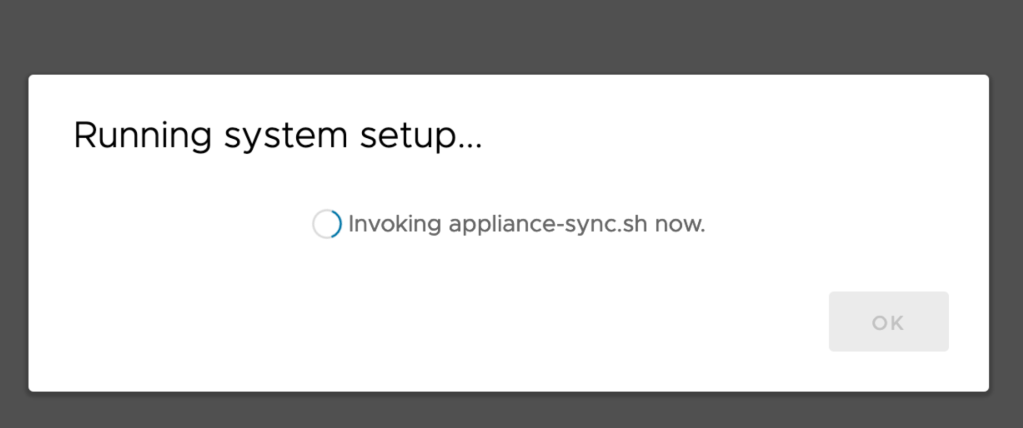

Better Standby Appliance Setup with vCD 10.2

At first I was a little confused when I couldn’t see the “VCD Configure” section, and wondered where I would set my NFS server settings 🙂

The good thing is that it is really simple: set the NTP server and IP address and click next 😀 Another setup screen will be waiting for you after the OVF deployment is over, and you set the NFS server and path there.

SUBMIT and wait for DB sync

It’s over …

Check the health; this is important because even if you had set automatic failover, its mode will be (as it was for me) INDETERMINATE.

Set it to automatic via the API again; if you need help setting it, check this out.

🙂

VM

vCD Upgrade or Patch after 10.1 :(

This story started when I gently f*cked up the upgrade of my 3-node vCD HA setup from 10.1 to 10.2.

My database availability mode was AUTOMATIC 😀

Because of that, and since I had not read the prerequisites carefully enough, I ran into trouble. I executed a Guest OS Shutdown on the vCD primary node and felt great, because I already had the backup and would simply take a snapshot and continue with the upgrade. After the reboot, however, I saw that I had two primaries, one of them in a failed state (of course it was my old primary DB).

I expected to have to fix this by re-registering the old primary node as a secondary and/or using repmgr node rejoin (I also saw something about it on Stack Overflow), but because this is production and there was no need to take risks, I followed this way:

Unregister the vCD node from repmgr (because this node is still recorded as a primary, use the primary subcommand to remove it):

/opt/vmware/vpostgres/current/bin/repmgr primary unregister --node-id=7590 -f /opt/vmware/vpostgres/current/etc/repmgr.conf

Then I deleted the related vCD node, created a new one as a standby, and joined it to the cluster again. After that everything looks fine; we are back in the game …

Auto Deployment of NSX-T 2nd and 3rd Manager Failed/Hung

Actually this is a very silly situation, but you may run into it too.

After the first NSX-T 3 Manager deployment, the 2nd and 3rd managers are deployed from the NSX-T Manager UI. Somehow, when I triggered the auto deployment, the installation hung at 1%.

When I checked in vCenter, there was indeed an event for the deployment, but it stayed at 0% and then timed out.

After some investigation I saw that vCenter and NSX-T Manager were using different DNS servers. The DNS server used by NSX-T Manager had a zone for the domain, but that zone did not contain the related records, so NSX-T Manager couldn’t resolve the FQDN of vCenter, and the OVF upload never finished and timed out …

After fixing the DNS issue, the problem was resolved …
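A quick way to catch this kind of thing earlier is to test name resolution from a machine that uses the same DNS server as NSX-T Manager. The sketch below uses only the Python standard library; the resolve helper and the vcenter.example.local name are placeholders of mine, and it only tells you what the local resolver of the machine you run it on answers, not what NSX-T Manager itself sees.

```python
import socket


def resolve(fqdn):
    """Return the sorted unique addresses for fqdn, or [] if it does not resolve."""
    try:
        infos = socket.getaddrinfo(fqdn, None)
    except socket.gaierror:
        return []
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the address.
    return sorted({info[4][0] for info in infos})


if __name__ == "__main__":
    name = "vcenter.example.local"  # placeholder FQDN
    addresses = resolve(name)
    print(name, "->", addresses if addresses else "NOT RESOLVED")
```

If the list comes back empty on the DNS server NSX-T Manager uses but not on the one vCenter uses, you are most likely looking at the same missing-record problem.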